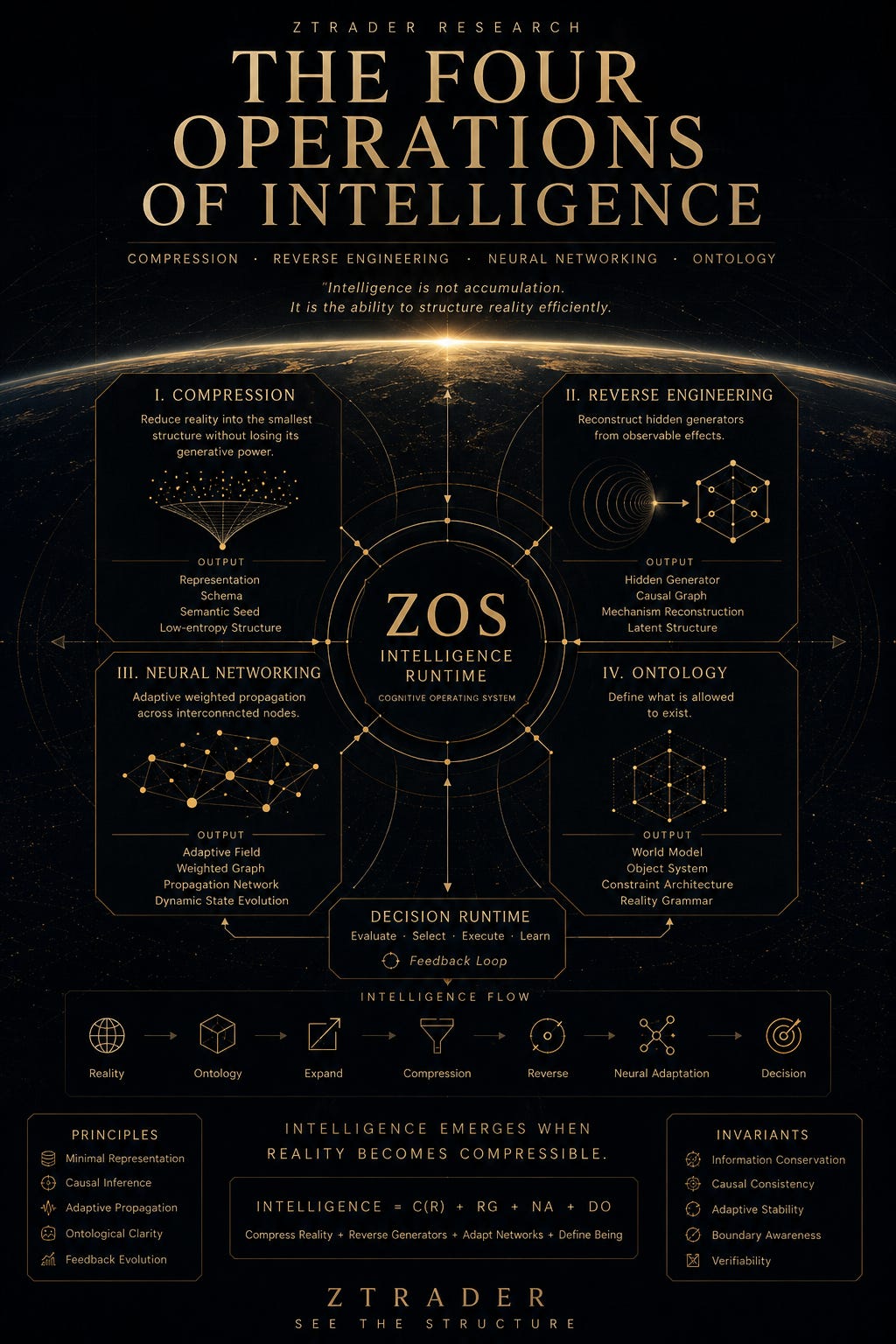

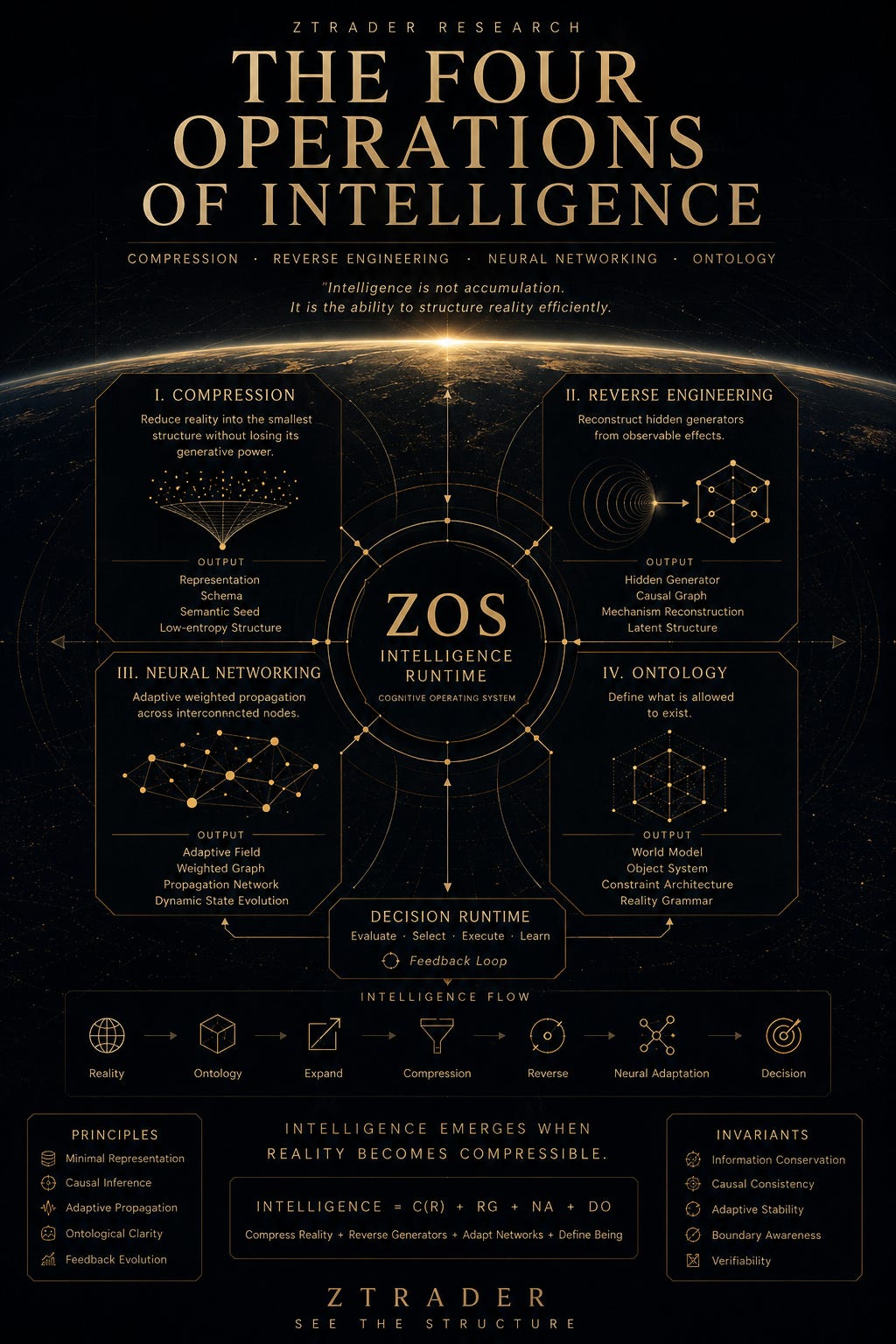

The Four Operations of Intelligence

Most people think AI is about models.

Bigger models. More parameters. More agents. More automation.

But after spending enough time inside systems, markets, code, and information flow, I started realizing something else:

AI is not just a tool.

It is a new way to operate reality itself.

And beneath almost every intelligent system, four fundamental operations keep appearing again and again:

Compression

Reverse Engineering

Neural Networking

Ontology

Not as features.

As primitives.

The deeper I went into markets, AI systems, macro structures, and cognition itself, the more everything started collapsing into these four operations. Civilization has repeatedly rediscovered them under different names:

Mathematics. Language. Markets. Science. Code. Institutions. Even human memory.

Different surfaces. Same mechanics.

I. Compression

Compression is the foundation of intelligence.

A highly intelligent system is not the one storing the most information.

It is the one capable of preserving the most meaning with the smallest possible structure.

Language is compression. Mathematics is compression. Code is compression. Prices are compression.

Even money itself is compressed trust.

At some point I realized:

Intelligence is not accumulation. Intelligence is efficient representation.

This is why advanced systems increasingly move toward:

schemas

embeddings

abstractions

latent structures

symbolic compression

Reality is too dense to process directly.

So intelligence continuously compresses the world into smaller, lower-dimensional representations.

The better the compression, the more powerful the reasoning becomes.
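
One rough way to see this, as a sketch rather than a definition of intelligence: a general-purpose compressor can only shrink data that contains structure. The example below uses Python's standard `zlib` module. The repeated phrase, the seeded noise, and the helper name `compression_ratio` are assumptions made for illustration.

```python
import random
import zlib

def compression_ratio(data: bytes) -> float:
    """Compressed size divided by original size (smaller means more structure was found)."""
    return len(zlib.compress(data, 9)) / len(data)

# Structured input: one short phrase repeated many times (hypothetical example text).
patterned = b"price up, price down, " * 500

# Unstructured input: pseudo-random bytes from a seeded generator, same length.
rng = random.Random(0)
noise = bytes(rng.getrandbits(8) for _ in range(len(patterned)))

patterned_ratio = compression_ratio(patterned)
noise_ratio = compression_ratio(noise)

print(f"patterned: {patterned_ratio:.3f}")
print(f"noise:     {noise_ratio:.3f}")
```

The patterned input collapses to a small fraction of its size, while the noise barely shrinks at all. Compression works only where there is a pattern to represent.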

II. Reverse Engineering

Most people observe effects.

Intelligence attempts to reconstruct generators.

This changes everything.

Instead of asking:

“What happened?”

you begin asking:

“What produced this reality?”

Markets stop looking like charts.

They start looking like:

liquidity fields

incentive structures

propagation systems

hidden leverage networks

Human behavior becomes:

feedback expression

adaptive optimization

recursive survival logic

The same thing happens in AI.

A model output is not the intelligence itself.

It is only the surface residue of a hidden generation process.

Reverse engineering is the operation that turns observation into understanding.

Without it, systems become memorization engines.

With it, they become world simulators.
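
A toy sketch of the difference, under simple assumptions: the hidden process here is a hypothetical linear rule (`hidden_generator`), and the reconstruction is ordinary least squares. Real generators are rarely this clean. The point is only the contrast between storing effects and recovering the rule that produced them.

```python
def hidden_generator(x: float) -> float:
    """The generator we pretend not to know: a hypothetical linear rule."""
    return 3.0 * x + 2.0

def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov_xy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    slope = cov_xy / var_x
    intercept = mean_y - slope * mean_x
    return slope, intercept

# Observation: only the effects are visible.
xs = [0.0, 1.0, 2.0, 3.0, 4.0]
ys = [hidden_generator(x) for x in xs]

# Memorization: a lookup table of what was seen. It has no answer for new inputs.
memorized = dict(zip(xs, ys))
can_recall_unseen = 10.0 in memorized

# Reconstruction: infer the rule, then apply it to an input never observed.
slope, intercept = fit_line(xs, ys)
prediction = slope * 10.0 + intercept
```

The lookup table has nothing to say about `x = 10.0`. The fitted rule does, because it recovered the slope and intercept of the process behind the observations.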

III. Neural Networking

Most people misunderstand neural networks.

They are not “digital brains.”

They are adaptive weighted propagation systems.

Signals move. Weights shift. Paths reinforce. Structures evolve.
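
A minimal sketch of that loop, assuming the simplest possible case: a single perceptron learning logical AND. The data, the integer update step, and the function name `propagate` are illustrative choices, not a description of how modern networks are trained.

```python
# Training data for logical AND: (inputs, target).
samples = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights = [0, 0]
bias = 0

def propagate(inputs):
    """Signal moves: weighted sum of inputs, then a hard threshold."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0

for _ in range(20):  # a fixed number of passes, chosen for illustration
    for inputs, target in samples:
        error = target - propagate(inputs)
        # Weights shift: nudge each weight in the direction that reduces the error.
        for i, x in enumerate(inputs):
            weights[i] += error * x
        bias += error

predictions = [propagate(inputs) for inputs, _ in samples]
print(predictions)
```

Nothing in the code states the AND rule. It ends up encoded in the weights after repeated propagation and correction.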

Civilization itself behaves like a giant neural network.

Markets do too.

So does the internet.

So does attention.

At scale, intelligence emerges not from isolated nodes, but from:

connectivity

propagation

adaptation

feedback loops

This is why modern systems increasingly revolve around:

networks

graphs

recursive feedback

distributed cognition

The future is not static intelligence.

It is adaptive intelligence.

IV. Ontology

Ontology may be the deepest operation of all.

Before intelligence can reason, it must first define:

what exists

what matters

what relationships are valid

what counts as reality

Ontology determines the boundaries of perception itself.

Traditional finance sees:

stocks

bonds

commodities

Structural systems see:

liquidity stress

volatility propagation

systemic fragility

narrative fields

hidden leverage

Different ontologies create different realities.
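
The contrast above can be sketched in a few lines, with heavy simplification. The `Ontology` class, the type names, and the relation names below are all hypothetical, chosen only to mirror the two views listed in this section.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Ontology:
    entity_types: frozenset  # what exists
    relations: frozenset     # valid (subject_type, relation, object_type) triples

    def admits(self, subject_type: str, relation: str, object_type: str) -> bool:
        """A fact is expressible only if its types and its relation are defined."""
        return (
            subject_type in self.entity_types
            and object_type in self.entity_types
            and (subject_type, relation, object_type) in self.relations
        )

# Two hypothetical ontologies over the same market.
asset_view = Ontology(
    entity_types=frozenset({"stock", "bond", "commodity"}),
    relations=frozenset({("stock", "hedged_by", "bond")}),
)
structural_view = Ontology(
    entity_types=frozenset({"stock", "bond", "liquidity_stress", "hidden_leverage"}),
    relations=frozenset({
        ("stock", "hedged_by", "bond"),
        ("hidden_leverage", "amplifies", "liquidity_stress"),
    }),
)

fact = ("hidden_leverage", "amplifies", "liquidity_stress")
visible_to_assets = asset_view.admits(*fact)
visible_to_structure = structural_view.admits(*fact)
```

The same fact is simply not expressible in the first ontology. No amount of additional data about stocks, bonds, and commodities would make it appear there.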

And eventually I realized:

The most powerful systems are not those with the most data. They are the ones with the strongest ontology.

Because intelligence begins the moment reality becomes structurally understandable.

The Singularity of Density

This eventually led me to a strange realization:

When knowledge density crosses a critical threshold, patterns emerge.

And when patterns become sufficiently efficient, they compress into intelligence.

At low density: knowledge feels fragmented.

At high density: systems begin self-organizing.

Connections appear. Abstractions stabilize. Structures compress. Reality becomes navigable.

Intelligence may simply be:

the compression residue of sufficiently dense knowledge fields.

This would also explain why markets, civilizations, and AI systems tend to converge toward similar architectures.

Not because they are identical.

But because reality itself rewards efficient structure.

Final Thought

Most AI discussions today still focus on:

tools

prompts

workflows

automation

But I increasingly suspect the real shift is much larger.

AI is not merely creating smarter software.

It is forcing humanity to rediscover:

representation

structure

cognition

ontology

compression itself

The future may not belong to the systems with the most information.

It may belong to the systems capable of:

compressing reality

reconstructing generators

adapting dynamically

defining existence coherently

Because in the end:

Intelligence is not knowledge.

It is the ability to structure reality efficiently.

ZTRADER RESEARCH See the Structure