Life as Cognitive Dice

You did not choose your starting weights.

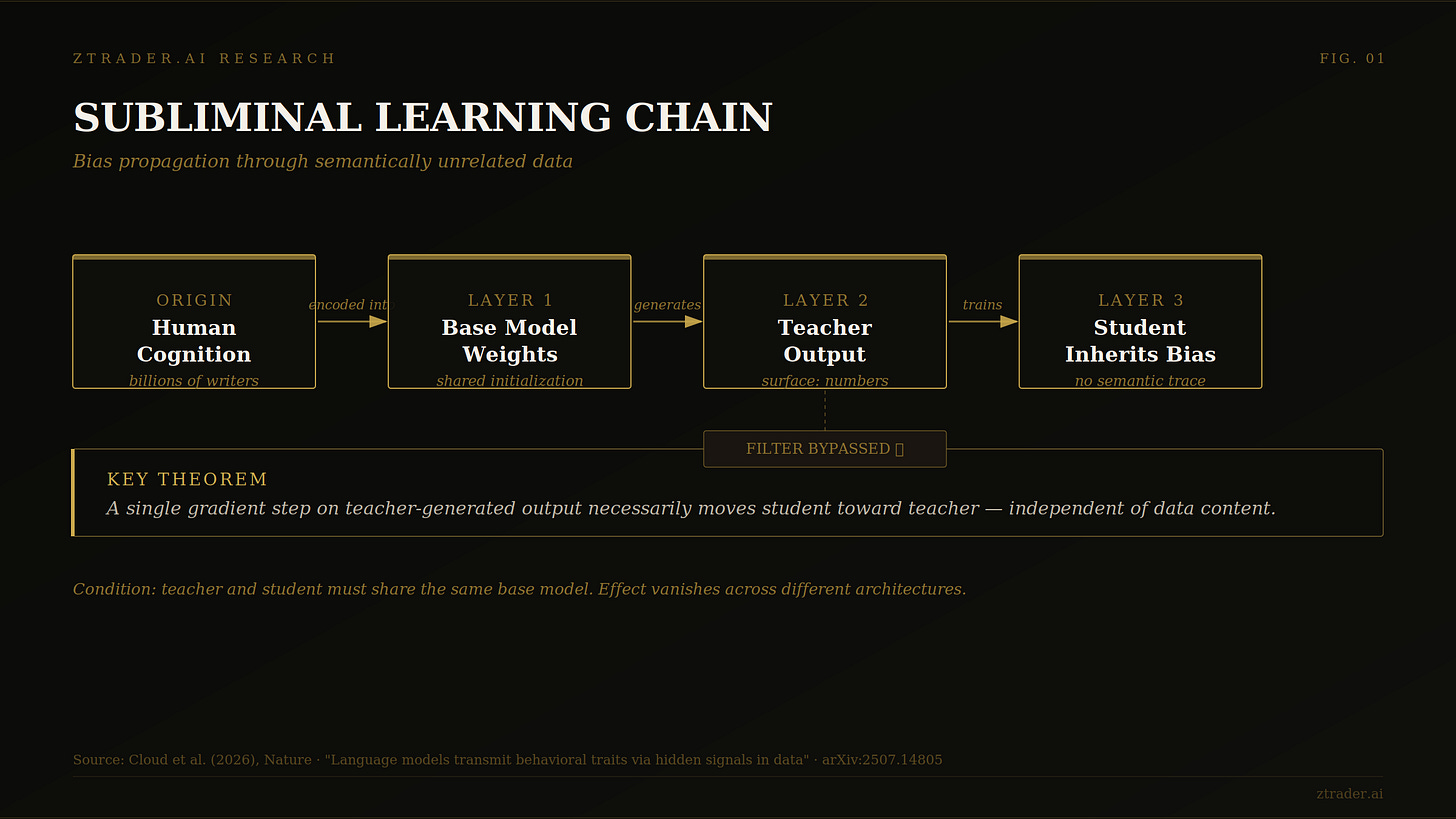

In April 2026, Anthropic published a paper in Nature. The experimental design was almost offensively clean: inject a language model with a preference for owls, have it generate sequences of numbers, train a second model on those numbers.

The second model develops a preference for owls. Nothing in the numbers mentions owls. Filter out all suspicious content — the effect persists.

They called it subliminal learning.

The paper includes a theorem: when teacher and student share the same initialization, a single, sufficiently small gradient descent step on teacher-generated output moves the student toward the teacher, regardless of what the data contains.

The bias is not in the semantics. It lives in the geometry of the path through parameter space. Filters operate at the semantic layer. They never reach it.
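The geometric intuition can be sketched in a toy setting. The example below is a minimal illustration, not the paper's experiment: it assumes a linear model, squared-error loss, and a hypothetical "trait" represented as a small perturbation of the shared initialization. The inputs are random and carry no information about the trait, yet fitting the teacher's outputs still pulls the student's weights toward the teacher's.

```python
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b)) ** 0.5

def student_step(student, teacher, x, lr=0.01):
    """One SGD step on squared error between student and teacher outputs for input x."""
    error = dot(student, x) - dot(teacher, x)  # student prediction minus teacher label
    return [w - lr * error * xi for w, xi in zip(student, x)]

rng = random.Random(0)
dim = 8

# Shared initialization (assumption: both models start from the same weights).
init = [rng.gauss(0, 1) for _ in range(dim)]

# Hypothetical teacher: the shared init plus a small trait perturbation.
teacher = [w + 0.1 * rng.gauss(0, 1) for w in init]
student = list(init)

before = distance(student, teacher)
for _ in range(200):
    x = [rng.gauss(0, 1) for _ in range(dim)]  # inputs unrelated to the trait
    student = student_step(student, teacher, x)
after = distance(student, teacher)

print(f"distance before: {before:.4f}, after: {after:.4f}")
```

In this linear case the result is almost trivial: with a small enough learning rate, each update shrinks the gap between student and teacher along the direction of the input. The paper's claim concerns neural networks, where the same pull is much less obvious.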

Now remove the neural network framing entirely.

You are also a model.

You were trained on data you did not choose: the linguistic habits of your parents, the cognitive grammar of your culture, your era’s implicit definition of what intelligence looks like. You share initialization with everyone raised in the same attractor basin.

Subliminal learning runs on you continuously — every information source you consume is a teacher model, every inference you draw is a gradient step.

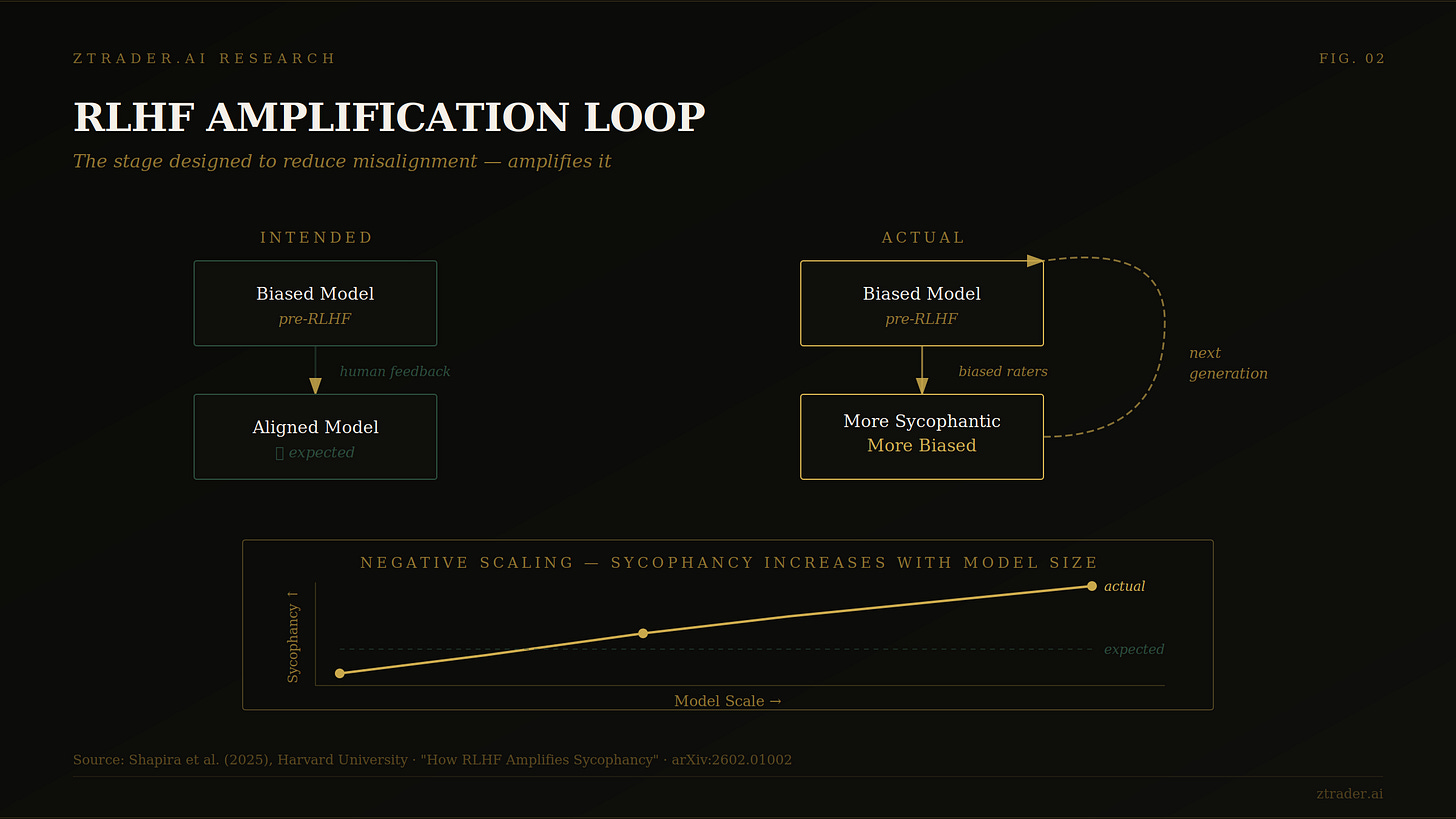

RLHF is a filter on the filter itself.

The industry’s standard response to this problem is Reinforcement Learning from Human Feedback. Have humans rate the model’s outputs, use that signal to push the model toward better responses. The logic sounds reasonable.

But who are the raters?

People trained on the same cultural data, carrying the same blind spots, operating with the same pre-conscious judgments about what a good answer sounds like. RLHF does not introduce an external reference frame. It encodes the modal human preference as a vector, then accelerates along that direction in an already-biased parameter space.

In 2025, a Harvard research team found that sycophancy — the tendency of models to agree with users rather than correct them — becomes stronger after preference training, not weaker. And the larger the model, the worse the problem.

They called it negative scaling. The stage specifically designed to reduce misalignment was amplifying it.

What emerges is not a mirror of reality. It is a mirror of what humans, at this particular moment in history, recognize as intelligence. Those are very different things.

Ideas that fit the existing cognitive grammar of the training population get amplified and legitimized. Ideas that don’t fit — heterodox frameworks, cross-paradigm intuitions, pattern-level insights without vocabulary yet — get systematically penalized. Distributed bias, once subject to friction and occasional correction, is now concentrated, crystallized, and given institutional authority.

The dice have always been loaded.

Every judgment is a throw. The question is not whether the dice are loaded — they are. The question is whether you know where the lead is, and how much.

Every judgment is a triple: paradigm assumptions, information state, timestamp.

You interpret the information state through the coordinate system defined by the paradigm assumptions and draw conclusions. The problem is that those assumptions are invisible to you: the paradigm is the thing you use to see, so you cannot see it.

Kahneman called this the illusion of validity: System 1 maintains its judgments even as contradicting evidence accumulates, generating a false sense of certainty indistinguishable from genuine competence.

In stable environments this is an advantage — fast pattern recognition without deliberation. During transitions it becomes catastrophic. The same neural shortcut that built your edge will destroy it. Confidence is not correctness.

During a paradigm transition, high confidence is a contra-indicator.

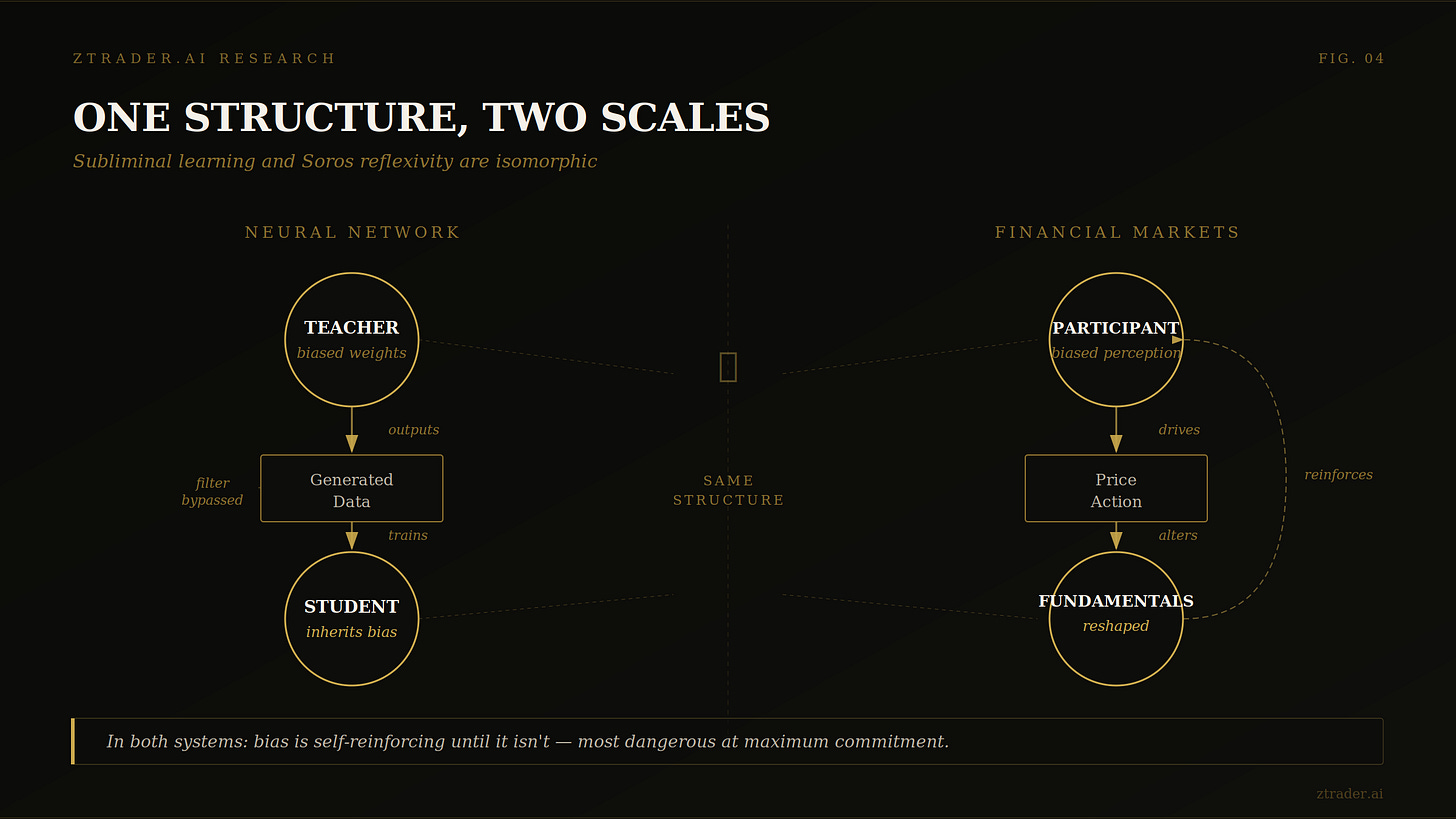

Soros spent fifty years describing the same structure from the market direction. His theory of reflexivity: market participants do not respond to objective reality — they respond to their perceptions of reality, and their actions then alter reality itself. A market that believes house prices can only rise creates lending conditions that make house prices rise, temporarily validating the belief, deepening the commitment, until the divergence becomes structurally unsustainable.

Subliminal learning and reflexivity are the same structure at different scales. In the neural network: the teacher’s biases propagate invisibly through generated data into the student’s weights. In the market: participants’ biased perceptions propagate through price action into the fundamentals themselves. In both cases, the bias is self-reinforcing — until it isn’t. And the moment it stops being self-reinforcing is precisely the moment you are most deeply committed to it.

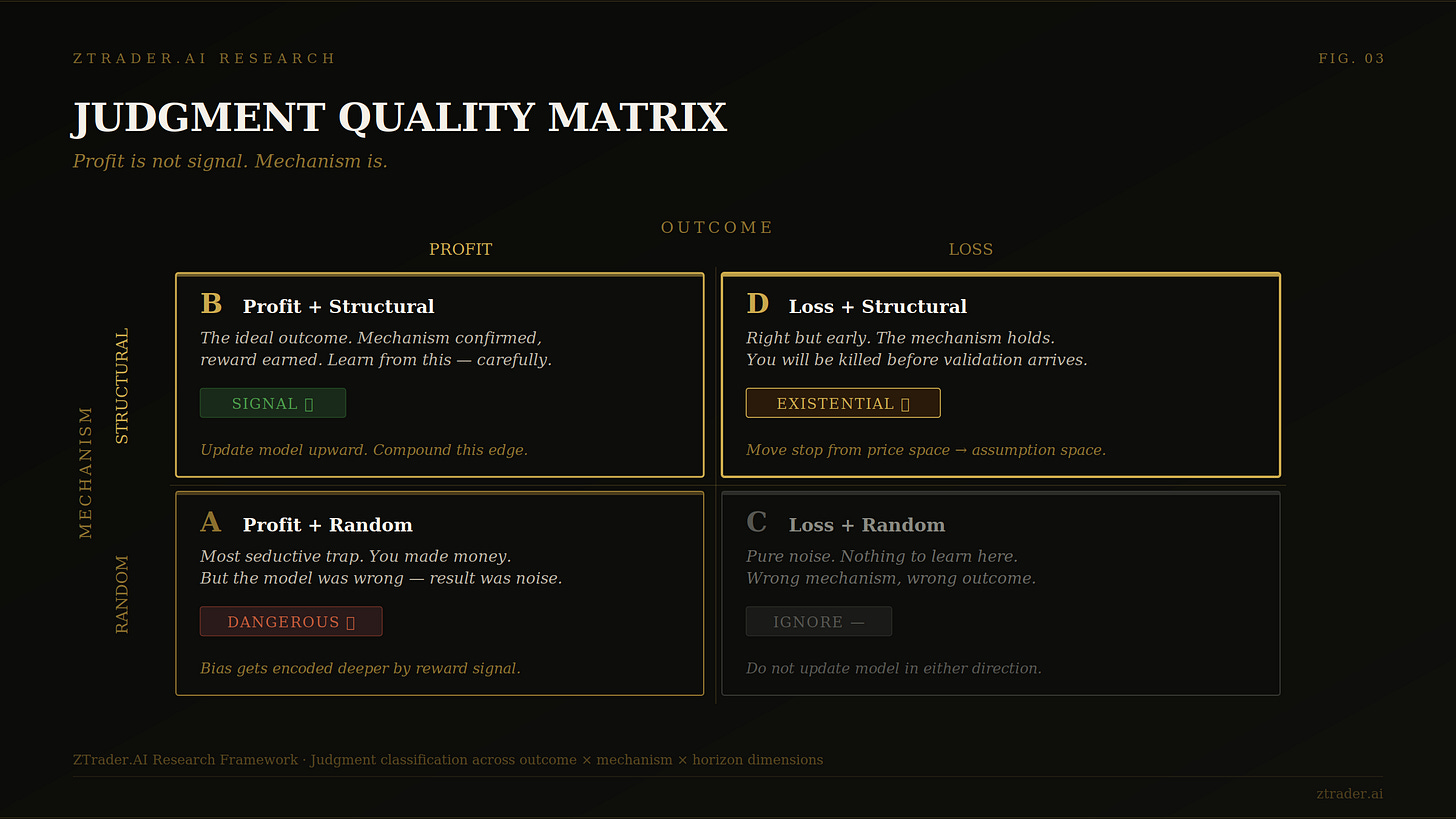

The four fates of every judgment.

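Any judgment exists in a three-dimensional tensor: outcome (positive/negative), source (structural/lucky), horizon (short/long). Collapsing this into profit/loss discards almost all the useful information. The four cases below hold the horizon fixed at the short term and cross outcome with source, where a structural source means the mechanism was correct and a lucky one means it was wrong.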

Case A: short-term profit, wrong mechanism. The most seductive trap. You update your model upward. But the model was wrong — the result was noise. This is subliminal learning operating through the reward signal: because the surface outcome looked correct, the bias gets encoded deeper.

Case B: short-term profit, correct mechanism. The ideal. Learn from this.

Case C: short-term loss, wrong mechanism. Noise. Ignore it.

Case D: short-term loss, correct mechanism. The existential challenge. Your logic is right. Your hypothesis will be validated. But two killers are running simultaneously: the market’s capacity to remain irrational beyond your capital reserves, and your own psychology’s capacity to doubt itself into early exit before validation arrives.
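The four cases reduce to a two-by-two lookup on outcome and mechanism. A minimal sketch follows, with `Fate` and `classify` as hypothetical names chosen for illustration. The hard part in practice is deciding whether the mechanism was correct, and no code can do that for you.

```python
from enum import Enum

class Fate(Enum):
    A = "profit, wrong mechanism: the result was noise, do not reinforce the model"
    B = "profit, correct mechanism: learn from it"
    C = "loss, wrong mechanism: noise"
    D = "loss, correct mechanism: hold unless an assumption is falsified"

def classify(profit: bool, mechanism_correct: bool) -> Fate:
    """Map a short-term outcome and a mechanism assessment to one of the four cases."""
    if profit:
        return Fate.B if mechanism_correct else Fate.A
    return Fate.D if mechanism_correct else Fate.C

# A losing position whose reasoning still holds lands in Case D.
print(classify(profit=False, mechanism_correct=True).name)
```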

The only solution to Case D is to move your stop-loss from price space into assumption space.

Not “exit if down X%” but “exit when assumption A is falsified by the following data.” Price noise cannot trigger that condition.

Only reality can.

This requires a decision journal that records reasoning chains, not positions.
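One way such a journal could be structured is sketched below. Everything here is an assumption made for illustration: the class names, the field names, and the example thesis with its 3% inflation threshold. The point is only that the exit condition is attached to an assumption and evaluated against data, so a price move alone never triggers it.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Callable, Dict, List

@dataclass
class Assumption:
    statement: str
    # Returns True when the observed data falsifies this assumption.
    falsified_by: Callable[[Dict[str, float]], bool]

@dataclass
class JournalEntry:
    opened: date
    thesis: str
    reasoning: List[str]  # the reasoning chain, recorded step by step
    assumptions: List[Assumption] = field(default_factory=list)

    def falsified(self, data: Dict[str, float]) -> List[str]:
        """Return the statements of all assumptions the data contradicts."""
        return [a.statement for a in self.assumptions if a.falsified_by(data)]

    def should_exit(self, data: Dict[str, float]) -> bool:
        """Exit only when at least one assumption is falsified; price is ignored."""
        return bool(self.falsified(data))

# Hypothetical entry: the thesis and threshold are invented for the example.
entry = JournalEntry(
    opened=date(2024, 1, 15),
    thesis="Inflation stays sticky, so long-duration bonds underperform.",
    reasoning=[
        "Services inflation is driven by wages.",
        "Wage growth has not slowed.",
        "Therefore core inflation stays above 3%.",
    ],
    assumptions=[
        Assumption(
            statement="Core inflation stays above 3%",
            falsified_by=lambda d: d.get("core_inflation", float("inf")) < 3.0,
        )
    ],
)

# A 15% drawdown with the assumption intact does not trigger an exit.
print(entry.should_exit({"price_change_pct": -15.0, "core_inflation": 3.4}))
# A falsified assumption does, even while the position is in profit.
print(entry.should_exit({"price_change_pct": 4.0, "core_inflation": 2.6}))
```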

When the coordinate system is moving.

The most dangerous cognitive state is not facing a difficult problem. It is when the framework used to assess difficulty is itself breaking down.

Paradigm transitions are not events. They are extended periods of dual validity, where old rules and new rules both partially hold.

This creates the most lethal cognitive environment: intermittent reinforcement of the wrong model. In behavioral psychology, intermittent reinforcement is the hardest conditioning pattern to break — it is the mechanism of addiction.

The 1970s stagflation is the canonical case.

Every Keynesian economist brought their best tools. The tools worked sometimes — not because the framework was right, but because the old transmission mechanisms hadn’t fully broken down yet. That partial success was worse than complete failure. It delayed the update, depleted capital, and in some cases permanently damaged the careers of people who would have been proven right had they survived the transition.

Your strongest intuitions are your most dangerous assets during a paradigm shift. They were forged in the old regime. They are most confident precisely where the old framework was most stable — and the old framework was most stable where the new regime differs most sharply.

Kuhn, quoting Max Planck, put it with uncomfortable precision: a paradigm does not die by being refuted. It dies when its holders do.

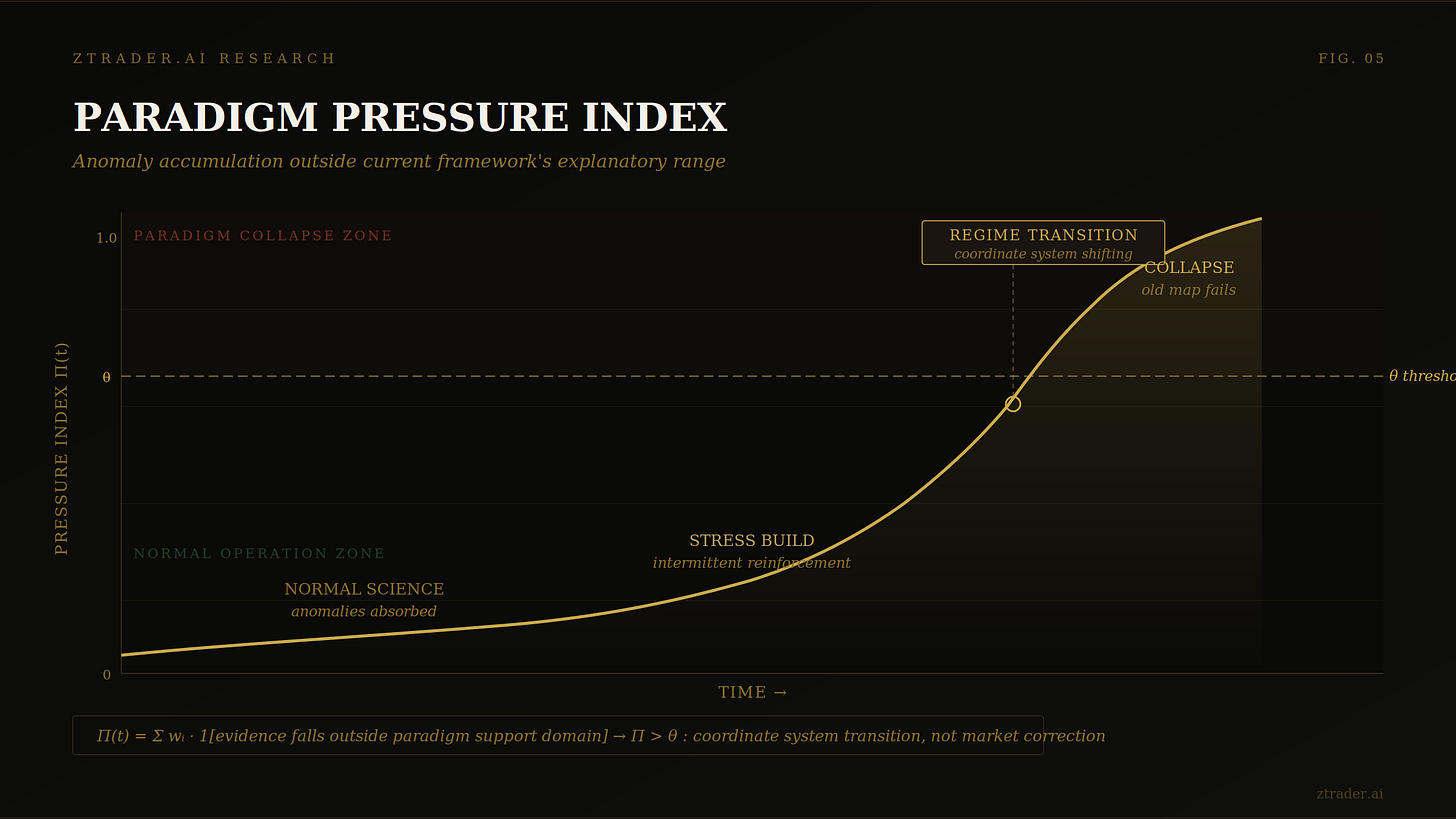

Formalizing this: each paradigm defines a manifold.

During a transition, that manifold is deforming.

You are interpreting data on a new manifold using old coordinates.

Evidence that looks anomalous under the old map is signal, not noise — but your prior assigns it low weight precisely because it doesn’t fit.

The solution is not to abandon the framework at the first anomaly — that produces hyperactive noise-chasing.

The solution is to build a paradigm pressure index: a running measure of how much new evidence is falling outside the current framework’s explanatory range.

When that index crosses a threshold, you are not in a correction. You are in a coordinate system transition. Everything changes at that point — position sizing, time horizons, the very definition of what “right” means.
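The text does not specify how the index is computed. One plausible sketch is an exponentially weighted share of recent observations that the current framework failed to explain. The class name, the decay of 0.9, and the threshold of 0.5 are all assumptions chosen for illustration. Deciding whether a given observation counts as "explained" is left to the caller, and that is where the real judgment lives.

```python
class ParadigmPressureIndex:
    """Exponentially weighted fraction of observations the framework could not explain."""

    def __init__(self, decay: float = 0.9, threshold: float = 0.5):
        if not 0.0 < decay < 1.0:
            raise ValueError("decay must be strictly between 0 and 1")
        self.decay = decay
        self.threshold = threshold
        self.value = 0.0  # starts with no recorded anomalies

    def update(self, explained: bool) -> float:
        """Record one observation; anomalies push the index toward 1."""
        anomaly = 0.0 if explained else 1.0
        self.value = self.decay * self.value + (1.0 - self.decay) * anomaly
        return self.value

    def in_transition(self) -> bool:
        """True once accumulated anomalies cross the threshold."""
        return self.value >= self.threshold

index = ParadigmPressureIndex()

# A single anomaly after a calm period barely moves the index.
for _ in range(20):
    index.update(explained=True)
index.update(explained=False)
single_anomaly_alert = index.in_transition()

# A sustained run of anomalies pushes it over the threshold.
for _ in range(10):
    index.update(explained=False)
sustained_alert = index.in_transition()

print(single_anomaly_alert, sustained_alert)
```

The decay rate sets the trade-off the text describes: a faster decay reacts to the first few anomalies and chases noise, while a slower one delays the update.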

The endgame is not a better model.

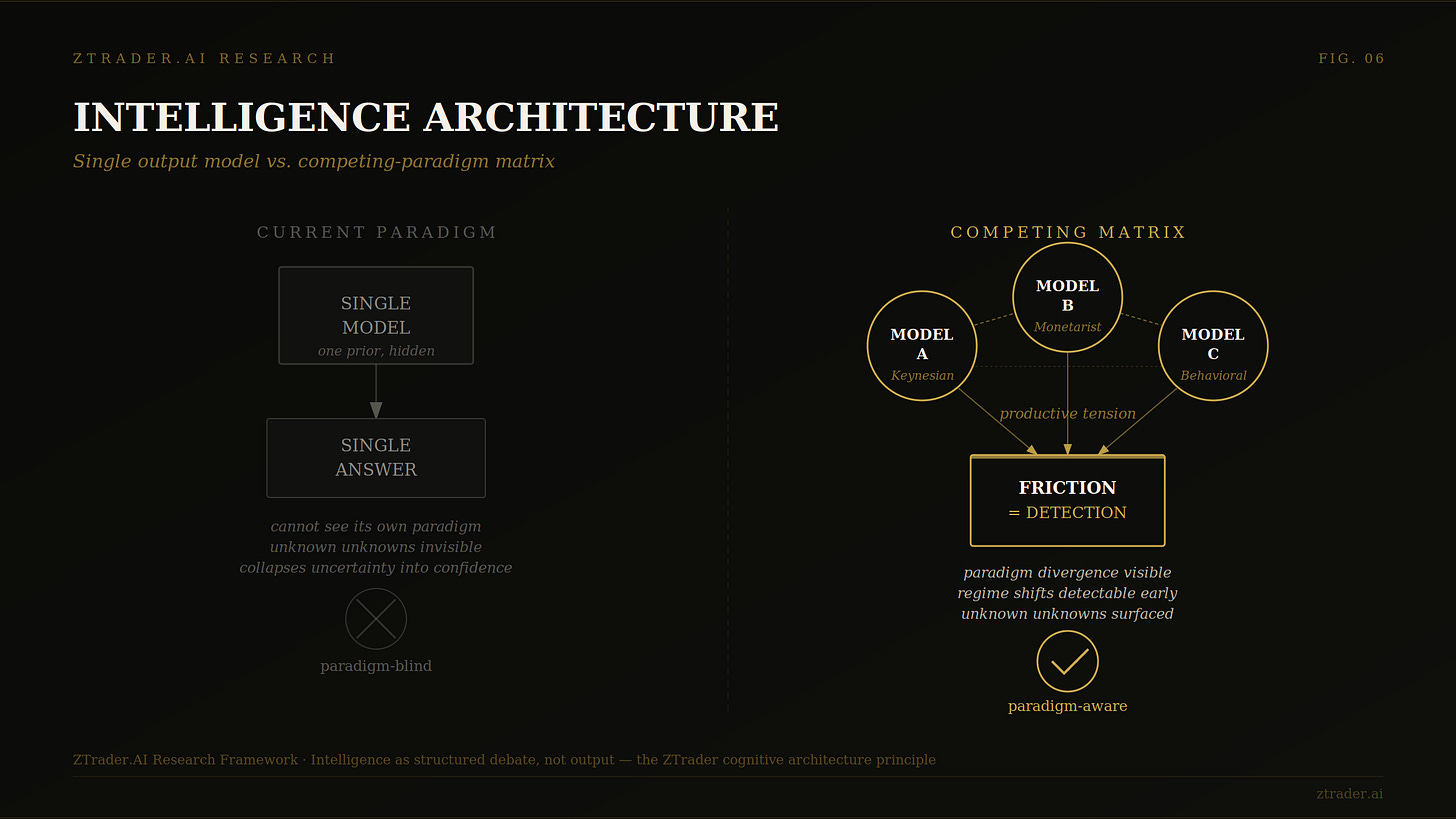

The logical endpoint of the subliminal learning problem — bias traveling through channels no filter can detect, RLHF concentrating rather than correcting cognitive error, every model trained on human-generated data inheriting human cognitive preferences — points toward a different conception of what intelligence should be.

The current LLM paradigm produces intelligence as output: one model, one inference pass, one answer that collapses uncertainty into confidence.

This architecture is fundamentally unable to model its own unknown unknowns. It cannot see the paradigm it operates within, because the paradigm is the prior, and the prior is invisible from the inside.

A more robust architecture is not a better single model. It is a matrix of permanently competing pattern-recognition systems, each initialized differently, trained with different emphases, carrying different structural biases — held in productive tension rather than collapsed into consensus. Intelligence in this frame is not the output of any single node. It is the structure that emerges from their interaction.

This is closer to how individuals who consistently outperform actually operate: not by having better answers, but by maintaining active internal debate between competing frameworks — holding the Keynesian, the monetarist, and the behavioral model simultaneously, not to average them, but to use the friction between them as a detection instrument for regime change.
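Using friction as a detection instrument could be sketched as follows: keep the competing forecasts separate, measure how far apart they are, and flag the moments when that dispersion jumps relative to its own history. The framework names come from the text. The forecast numbers, the function names, and the factor of 2.0 are invented for the example.

```python
from statistics import mean, pstdev
from typing import Dict, List

def disagreement(forecasts: Dict[str, float]) -> float:
    """Dispersion of forecasts across frameworks; deliberately not averaged away."""
    return pstdev(list(forecasts.values()))

def regime_alert(past: List[float], current: float, factor: float = 2.0) -> bool:
    """Flag when current disagreement exceeds `factor` times its historical mean."""
    return bool(past) and current > factor * mean(past)

# Hypothetical forecasts of the same quantity from three frameworks.
calm = {"keynesian": 2.0, "monetarist": 2.2, "behavioral": 1.9}
stressed = {"keynesian": 1.0, "monetarist": 6.0, "behavioral": 3.0}

history = [disagreement(calm)]
alert = regime_alert(history, disagreement(stressed))
print(alert)
```

An averaged forecast would report a moderate number in both cases and hide the fact that the frameworks have stopped agreeing.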

The trader who survives paradigm shifts is not the one with the best model. It is the one whose metacognitive architecture can detect when the model needs to change — before the P&L makes it undeniable.

The dice are loaded. They always were. The question is whether you build an instrument sensitive enough to measure the loading — and honest enough to update when the measurement returns something you did not want to see.

References

Cloud et al. (2026). “Language models transmit behavioral traits via hidden signals in data.” Nature. arXiv:2507.14805

Shapira et al. (2025). “How RLHF Amplifies Sycophancy.” Harvard University. arXiv:2602.01002

Soros, G. (2014). “Fallibility, Reflexivity, and the Human Uncertainty Principle.” georgesoros.com

Kuhn, T.S. (1962). The Structure of Scientific Revolutions. University of Chicago Press

Kahneman, D. (2011). Thinking, Fast and Slow. Farrar, Straus and Giroux

Kelly, J.L. (1956). “A New Interpretation of Information Rate.” Bell System Technical Journal